
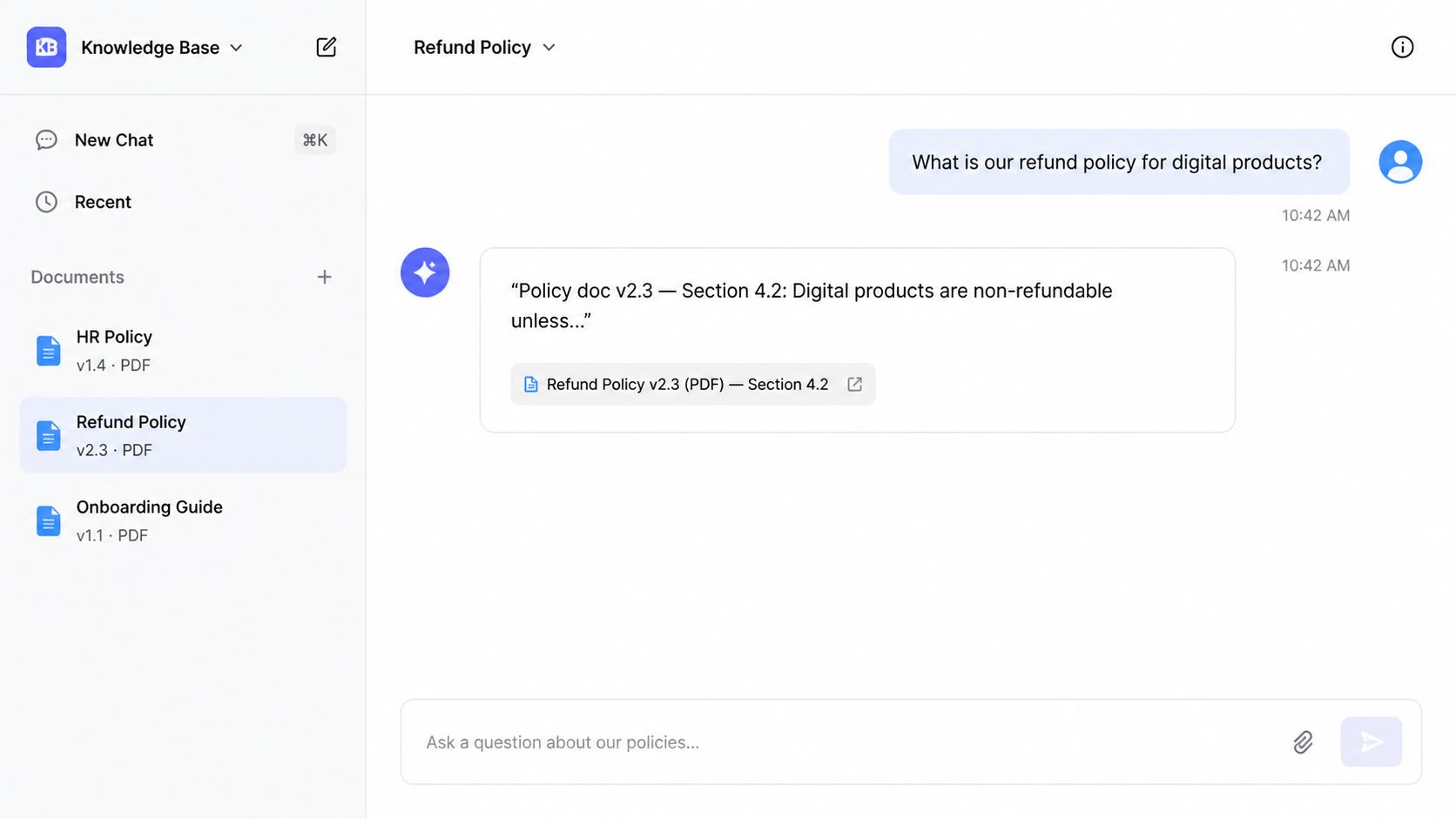

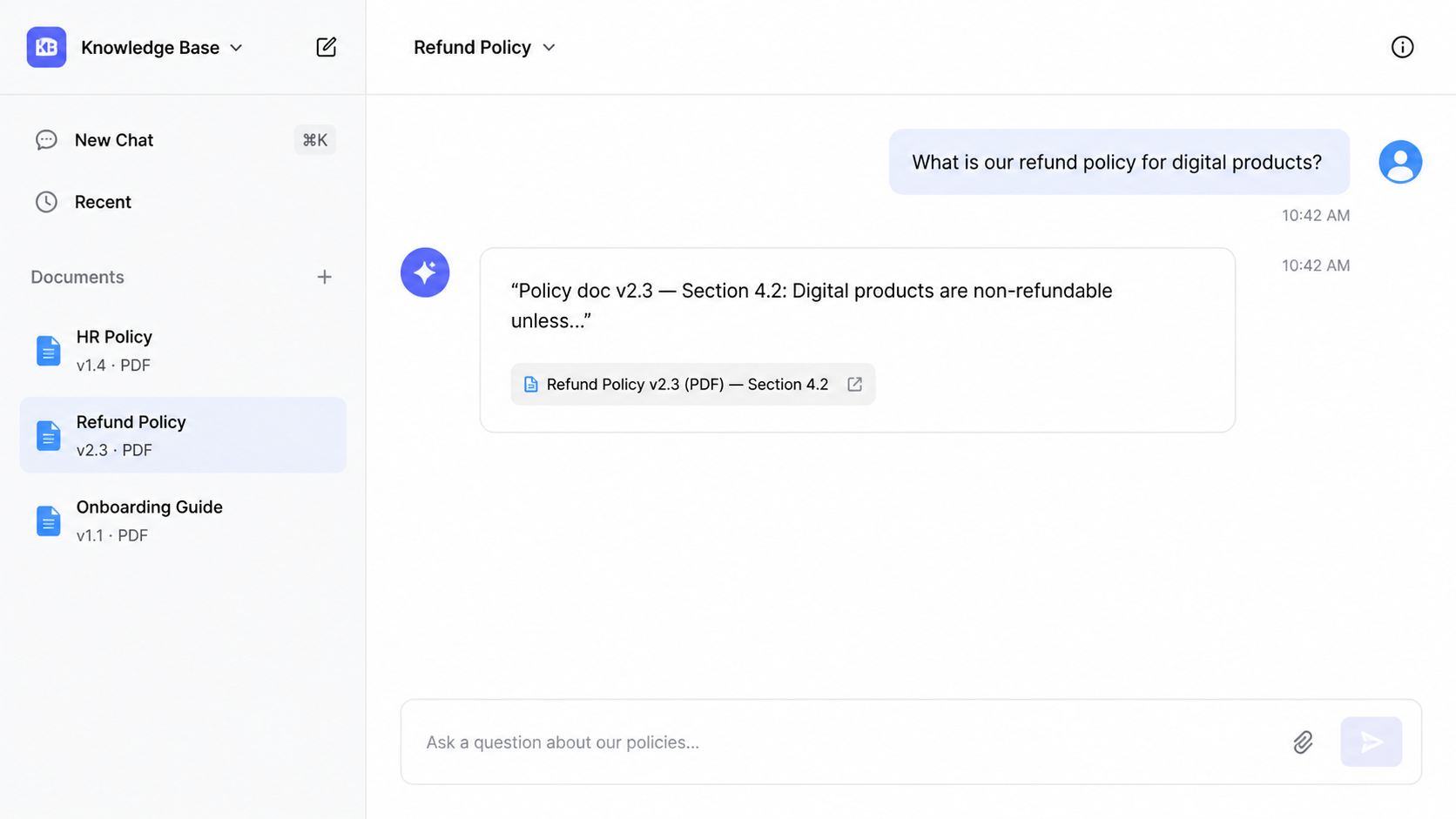

AI Knowledge Base Agent

Services

- Document ingestion pipeline (Google Drive, Notion, PDF)

- RAG knowledge base with hybrid vector + keyword search

- Slack bot integration with threaded responses

- Web interface with conversation history and source citations

- Support-ready reply drafting mode

- Incremental index updates on document changes

Deliverables

- AI assistant that indexes existing docs, with no migration required

- Natural language Q&A with source citations and links

- Slack bot for the team that answers in threads

- Draft customer reply generator for support agents

- Feedback loop for continuous quality improvement

- Hybrid search: dense embeddings + BM25 keyword retrieval

Challenge

A growing team had accumulated three years of company documentation across Google Drive folders, a Notion wiki, and email archives. Institutional knowledge was locked in PDFs and documents that no one could find quickly. New hires spent their first weeks asking senior colleagues questions that were already documented somewhere. Support agents rewrote the same response to the same customer question again and again because the canonical answer wasn't surfaced anywhere.

Options Considered

- Better folder structure and search — tried previously. Full-text search helped with exact keywords but failed for conceptual questions like "what is our policy on partial refunds for digital products."

- Dedicated wiki tool (Confluence, Guru) — required manual migration of existing docs and ongoing curation. Adoption dropped off after three months in a previous attempt.

- RAG-powered AI agent over existing documents — chosen. Works on documents where they already live, requires no migration, and answers questions in natural language rather than returning a link to a page the user still has to read.

Decision

The agent indexes all existing documentation — Google Drive, Notion pages, PDF uploads — into a vector database. A Slack bot and a web interface let team members ask questions in plain language. The agent retrieves the relevant document chunks, synthesises an answer, and cites the source document with a link. Support agents get a "draft customer reply" button that generates a support-ready response from the same knowledge base.
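The retrieve, synthesise, and cite flow described above can be sketched as a prompt-assembly step. This is a minimal illustration, not the project's actual code: the `Chunk` type, `build_prompt`, `citation_links`, the prompt wording, and the example URLs are all hypothetical, and the call to the language model is left out.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    """A retrieved document chunk (hypothetical shape, for illustration)."""
    text: str
    title: str
    url: str


def build_prompt(question: str, chunks: list[Chunk]) -> str:
    """Number each retrieved chunk so the model can cite it as [1], [2], ..."""
    sources = "\n\n".join(
        f"[{i}] {c.title} ({c.url})\n{c.text}"
        for i, c in enumerate(chunks, start=1)
    )
    return (
        "Answer the question using only the sources below. "
        "Cite sources by number, e.g. [1]. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )


def citation_links(answer: str, chunks: list[Chunk]) -> list[str]:
    """Return the URLs of the sources the answer cites, in source order."""
    return [c.url for i, c in enumerate(chunks, start=1) if f"[{i}]" in answer]


chunks = [
    Chunk("Partial refunds are issued within 14 days.", "Refund policy",
          "https://example.com/refunds"),
    Chunk("Orders ship within 2 business days.", "Shipping",
          "https://example.com/shipping"),
]
prompt = build_prompt("Do we offer partial refunds?", chunks)
links = citation_links("Yes, within 14 days [1].", chunks)
```

Numbering the sources in the prompt keeps the link lookup trivial: only the documents the answer actually cites are shown to the user.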

Implementation

A document ingestion pipeline syncs Google Drive and Notion via their APIs, chunks documents into overlapping segments, and embeds them with OpenAI embeddings into a Pinecone index. The index is updated incrementally when documents change. The retrieval layer uses hybrid search, combining dense vector similarity with BM25 keyword scoring, to improve recall on named entities and product codes.
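The three ideas in this paragraph (overlapping chunks, incremental updates, hybrid ranking) can be sketched in plain Python. Everything here is an assumption made for illustration: the chunk sizes, the use of a SHA-256 content hash to detect changed documents, and reciprocal rank fusion (RRF) as the way to merge the dense and BM25 result lists. The case study does not say how the two rankings were actually combined, and the embedding and Pinecone calls are omitted.

```python
import hashlib


def chunk_text(text: str, size: int = 800, overlap: int = 200) -> list[str]:
    """Split text into character windows where neighbours share `overlap` chars."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    if not text:
        return []
    step = size - overlap
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]


def content_hash(text: str) -> str:
    """Stable fingerprint of a document's content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def changed_docs(docs: dict[str, str], seen: dict[str, str]) -> list[str]:
    """Return ids of documents whose hash differs from the stored one.

    `docs` maps id -> current text, `seen` maps id -> hash at last indexing.
    """
    return [doc_id for doc_id, text in docs.items()
            if seen.get(doc_id) != content_hash(text)]


def rrf_fuse(dense: list[str], keyword: list[str], k: int = 60) -> list[str]:
    """Merge two ranked lists of chunk ids with reciprocal rank fusion."""
    scores: dict[str, float] = {}
    for ranking in (dense, keyword):
        for rank, chunk_id in enumerate(ranking, start=1):
            scores[chunk_id] = scores.get(chunk_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for a deterministic order.
    return sorted(scores, key=lambda cid: (-scores[cid], cid))


pieces = chunk_text("abcdefghij", size=4, overlap=2)
stale = changed_docs({"a": "new text", "b": "same"},
                     {"a": content_hash("old text"), "b": content_hash("same")})
fused = rrf_fuse(["a", "b", "c"], ["b", "d"])
```

A chunk that appears in both rankings (here `b`) rises to the top, which is the behaviour that helps exact matches on product codes survive alongside semantically similar passages.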

The Slack bot uses Slack's Bolt framework and responds in threads to keep channels clean. The web interface adds conversation history and a feedback button (thumbs up/down) that logs low-quality answers for prompt tuning. The "draft customer reply" mode applies a separate prompt that reformats the answer for external audiences, removes internal jargon, and adds a polite opening and close.
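A rough sketch of the three behaviours described here: threaded replies, feedback logging, and the separate draft-reply prompt. The helper names, the prompt wording, and the log format are hypothetical. Only the `ts` and `thread_ts` fields come from Slack's message event payload; in a Bolt handler the value would be passed as `say(text=..., thread_ts=...)`.

```python
import json


def thread_ts_for(event: dict) -> str:
    """Reply in the existing thread if there is one, else thread on the message."""
    return event.get("thread_ts") or event["ts"]


# Hypothetical instructions for the "draft customer reply" mode.
DRAFT_REPLY_INSTRUCTIONS = (
    "Rewrite the internal answer as a reply to a customer. "
    "Remove internal jargon and references to internal documents. "
    "Open with a polite greeting and close with an offer of further help."
)


def build_draft_reply_prompt(customer_question: str, internal_answer: str) -> str:
    """Wrap an internal answer in the external-audience instructions."""
    return (
        f"{DRAFT_REPLY_INSTRUCTIONS}\n\n"
        f"Customer question: {customer_question}\n\n"
        f"Internal answer: {internal_answer}"
    )


def feedback_record(question: str, answer: str, thumbs_up: bool) -> str:
    """Serialise one feedback event as a JSON line for later prompt tuning."""
    return json.dumps(
        {"question": question, "answer": answer, "thumbs_up": thumbs_up},
        sort_keys=True,
    )


top_level = thread_ts_for({"ts": "1700000000.000100"})
in_thread = thread_ts_for({"ts": "1700000000.000200",
                           "thread_ts": "1700000000.000100"})
draft = build_draft_reply_prompt("Can I get a partial refund?",
                                 "Yes, per the refund policy, within 14 days.")
line = feedback_record("q", "a", False)
```

Keeping the draft-reply instructions in their own prompt means the internal Q&A prompt can stay terse while the customer-facing tone is tuned separately.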

Outcome

New-hire onboarding time for the documentation phase was cut from 2 weeks to 3 days. The support team reduced average handle time on policy questions by 65%. Senior team members reported fewer interruptions from questions that were "already documented somewhere." The system answered 80% of queries without any human escalation.
